Search Security

How do I protect my search from bots and abusive queries?

Most websites see some amount of "search noise": bots sending bursts of requests, users pasting long strings into the search box, or automated tools probing random characters and URLs.

For example, imagine your site normally gets a few hundred searches a day, but you suddenly see thousands of requests per hour from the same IP address — or queries like <<<<<<<<<<< and long garbled strings that clearly do not come from real visitors. In cases like this, it makes sense to add a few guardrails.

IP-based protections

These settings are found under Search Settings → IP Settings.

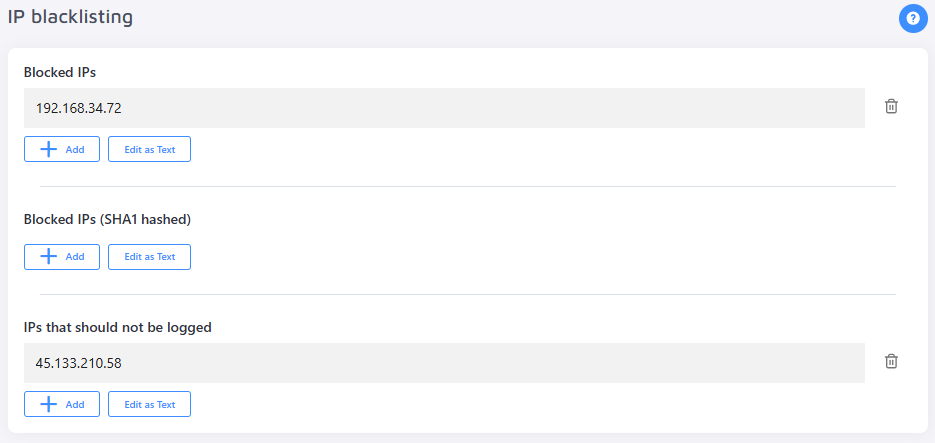

IP Blacklisting

If you can identify offending IP addresses, you can block them directly.

- Blocked IPs — blocks requests from the specified IP addresses.

- Blocked IPs (SHA1 hashed) — same as above, but accepts hashed IP values for added privacy.

- IPs that should not be logged — requests from these IPs will still be served, but will not appear in your analytics.

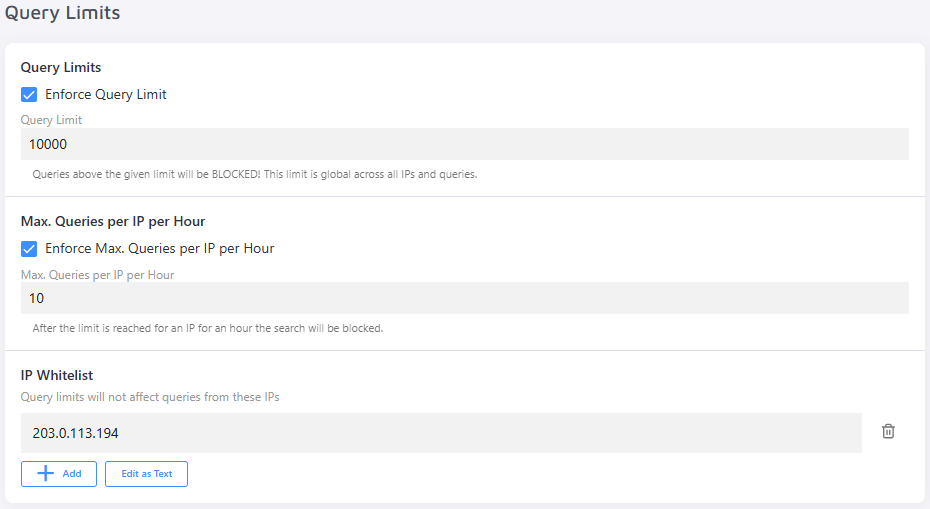
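If you want to use the hashed field, you need the SHA-1 digest of each address. The sketch below shows one way to produce it in Python. It assumes the field expects the lowercase hexadecimal SHA-1 of the plain-text IP string, which this article does not spell out, so verify the expected format before relying on it. The function name `sha1_hash_ip` is purely illustrative.

```python
import hashlib
import ipaddress


def sha1_hash_ip(ip: str) -> str:
    """Return the hex SHA-1 digest of an IP address string.

    Assumption: the hash is taken over the canonical text form of the
    address, encoded as UTF-8. Adjust if a different input format is expected.
    """
    # ip_address() validates the input and normalises its text form;
    # it raises ValueError for anything that is not a valid IPv4/IPv6 address.
    canonical = str(ipaddress.ip_address(ip.strip()))
    return hashlib.sha1(canonical.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    # 203.0.113.7 is a reserved documentation address, used here as a placeholder.
    print(sha1_hash_ip("203.0.113.7"))
```

The result is always a 40-character hexadecimal string, which you can paste into the hashed blocklist instead of the raw address.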

Query Limits

Use these options to cap how many searches an IP address — or your site as a whole — can perform.

- Enforce Query Limit — sets a cap on the total number of monthly queries your site will serve. To avoid unexpected overages, align this with your plan's quota.

- Max. Queries per IP per Hour — defines the per-hour request threshold for a single IP address.

- IP Whitelist — query limits will not apply to IPs added here. Use this to exempt internal or trusted IPs — such as your own team's — from being rate-limited during testing or monitoring.

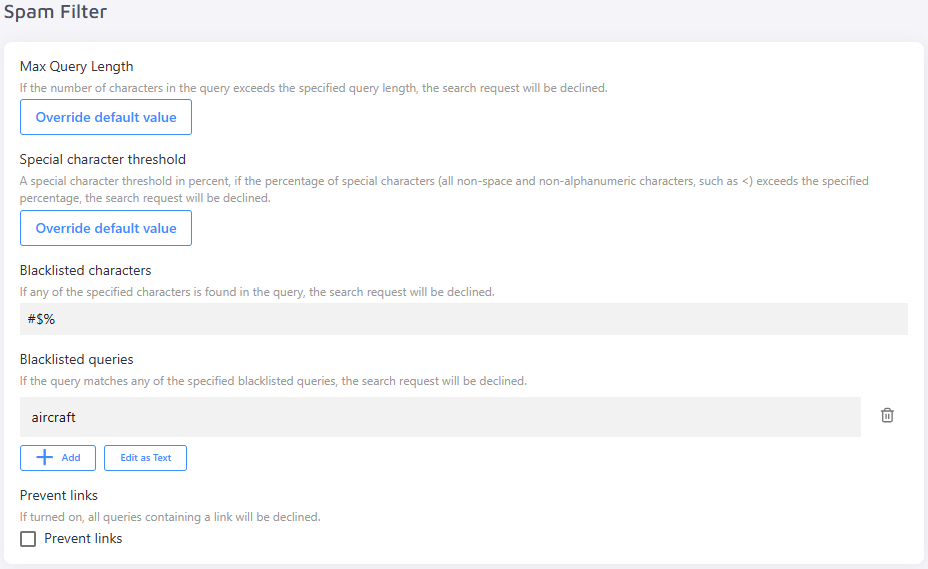
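To make the interaction between the hourly per-IP limit and the whitelist concrete, here is a minimal conceptual sketch. It is not how Site Search 360 implements these settings. The class name, the fixed one-hour window, and the in-memory counter are all assumptions chosen for illustration.

```python
from collections import defaultdict


class HourlyIpLimiter:
    """Illustrative fixed-window limiter: N queries per IP per clock hour."""

    def __init__(self, max_per_hour, whitelist=()):
        self.max_per_hour = max_per_hour
        self.whitelist = set(whitelist)
        # Maps (ip, hour_index) to the number of queries counted so far.
        self.counts = defaultdict(int)

    def allow(self, ip, timestamp):
        """Return True if a query from `ip` at `timestamp` (seconds) is served."""
        if ip in self.whitelist:
            return True  # whitelisted IPs are never rate-limited
        key = (ip, int(timestamp // 3600))
        if self.counts[key] >= self.max_per_hour:
            return False  # threshold reached for this hour
        self.counts[key] += 1
        return True
```

With a limit of 3, the fourth query from the same address within an hour is declined, a new hour resets the count, and a whitelisted address is served regardless of volume.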

Query-based protections (Spam Filter)

These settings are found under Search Settings → General → Spam Filter.

The Spam Filter declines requests that look suspicious — useful when your traffic contains automated queries that do not resemble normal human searches.

- Max Query Length: declines any request where the query exceeds the configured character limit.

- Special Character Threshold: a percentage-based threshold. If special characters (any non-space, non-alphanumeric character, such as `<`) make up more than the specified percentage of the query, the request will be declined.

- Blacklisted Characters: if any of the specified characters appears in the query, the request will be declined.

- Blacklisted Queries: if the query exactly matches one of the blacklisted entries, the request will be declined.

- Prevent Links: when enabled, any query containing a URL or link will be declined.
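The five checks above can be pictured as a single function that returns the first rule a query violates. This is a rough sketch for reasoning about which threshold values to pick, not the service's actual implementation. The default values, the link pattern, the order of checks, and the choice to compute the percentage over the full query length are all assumptions.

```python
import re
from dataclasses import dataclass

# Assumption: a "link" is anything starting with http://, https:// or www.
URL_PATTERN = re.compile(r"(https?://|www\.)\S+", re.IGNORECASE)


@dataclass
class SpamFilterConfig:
    # All defaults below are illustrative, not product defaults.
    max_query_length: int = 100
    special_char_threshold_pct: float = 30.0
    blacklisted_chars: frozenset = frozenset("<>{}")
    blacklisted_queries: frozenset = frozenset({"test"})
    prevent_links: bool = True


def special_char_percentage(query):
    """Share of characters that are neither alphanumeric nor whitespace."""
    if not query:
        return 0.0
    special = sum(1 for ch in query if not ch.isalnum() and not ch.isspace())
    return 100.0 * special / len(query)


def decline_reason(query, config):
    """Return the name of the first violated rule, or None if the query passes."""
    if len(query) > config.max_query_length:
        return "max_query_length"
    if special_char_percentage(query) > config.special_char_threshold_pct:
        return "special_character_threshold"
    if any(ch in config.blacklisted_chars for ch in query):
        return "blacklisted_characters"
    if query in config.blacklisted_queries:
        return "blacklisted_queries"
    if config.prevent_links and URL_PATTERN.search(query):
        return "prevent_links"
    return None
```

Running your own recent queries through a function like this before changing the settings can show whether a threshold would also decline legitimate searches, for example product codes that contain many hyphens or slashes.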

Protecting your site from bots

Site Search 360 can help you rate-limit traffic and decline suspicious queries, but it cannot stop bots from reaching your website in the first place. If you are dealing with heavy bot traffic, consider adding protection upstream, before requests ever reach your search endpoints.

Effective bot mitigation works best as a layered effort. Think of it as a funnel: the earlier you identify and block bot traffic, the less impact it has on your query quota and your users' experience. Site Search 360 sits at the end of that funnel. The measures below are outside of Site Search 360 but represent the highest-impact steps you can take.

CDN and Web Application Firewall (WAF)

A CDN or WAF sits in front of your website and can block the majority of bot traffic before it reaches your search implementation. This is the most effective single step you can take.

Cloudflare is the most widely used option, with meaningful bot protection available even on its free plan:

- Bot Fight Mode (Free): automatically detects and challenges known bad bots.

- Rate Limiting Rules (Free and above): cap requests per IP within a time window, targeted at your search path (e.g. paths or query strings containing `q=` or `/search`).

- Super Bot Fight Mode (Pro and above): uses machine learning to distinguish verified crawlers from malicious bots.

- Bot Management (Enterprise): assigns every request a bot score and lets you define rules per score range.

Further reading: Bot Fight Mode · Rate Limiting Rules · Super Bot Fight Mode · Bot Management

If you are hosted on a major cloud platform, note that AWS, Azure, and Google Cloud each offer their own WAF with bot control capabilities.

Further reading: AWS WAF Bot Control · Azure Web Application Firewall · Google Cloud Armor

Server-side rate limiting

If you manage your own server, you can add rate limiting directly at the server level targeting your search endpoint. This complements the built-in Max. Queries per IP per Hour setting in Site Search 360, catching excessive traffic before it reaches our service.

For example, the following Nginx configuration limits each IP to 10 search requests per minute with a short burst allowance:

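    # http context: track clients by IP in a 10 MB shared zone at 10 requests per minute.
    limit_req_zone $binary_remote_addr zone=search:10m rate=10r/m;

    # server context: apply the limit to the search path.
    location /search {
        # Serve up to 20 excess requests immediately; reject anything beyond that.
        limit_req zone=search burst=20 nodelay;
        # Answer rejected requests with 429 Too Many Requests instead of the default 503.
        limit_req_status 429;
    }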
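To get a feel for what `rate=10r/m` combined with `burst=20 nodelay` means for a single client, the following Python sketch models the leaky-bucket accounting in simplified form. It is an approximation written for illustration, not Nginx source code. The class name `LimitReqModel` and the millisecond timestamps are assumptions.

```python
class LimitReqModel:
    """Simplified single-client model of leaky-bucket limiting with nodelay."""

    def __init__(self, rate_per_minute, burst):
        # Work in thousandths of a request so all arithmetic stays integral.
        self.rate_milli_per_sec = rate_per_minute * 1000 // 60
        self.burst_milli = burst * 1000
        self.excess_milli = 0
        self.last_ms = None  # no request seen yet

    def allow(self, now_ms):
        """Return True if a request arriving at `now_ms` would be served."""
        if self.last_ms is None:
            self.last_ms = now_ms
            return True  # the first request always passes
        elapsed_ms = max(now_ms - self.last_ms, 0)
        # The bucket drains at the configured rate while the client is idle.
        drained = self.rate_milli_per_sec * elapsed_ms // 1000
        excess = max(self.excess_milli - drained + 1000, 0)
        if excess > self.burst_milli:
            return False  # over the burst allowance: rejected (429 in the config)
        self.excess_milli = excess
        self.last_ms = now_ms
        return True


if __name__ == "__main__":
    model = LimitReqModel(rate_per_minute=10, burst=20)
    accepted = sum(model.allow(0) for _ in range(25))
    print(accepted)  # requests accepted out of 25 sent at the same instant
```

Under this model, 21 of 25 simultaneous requests are accepted (the first one plus the burst of 20) and the rest are rejected. After that, the client regains roughly one request every six seconds. If that initial allowance is more generous than you want, lower `burst`.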

Additional server-level measures worth considering:

- User-Agent filtering: block requests from known bot signatures such as `curl`, `python-requests`, or `scrapy`.

- Request header validation: real browsers consistently send headers like `Accept`, `Accept-Language`, and `Referer`; many bots do not.

- Fail2ban: automatically bans IPs that trigger an unusual number of errors within a short time window.
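The first two measures can be combined into one header check in whatever application code sits in front of your search page. The sketch below is a starting point under stated assumptions: the marker list mirrors the examples above, the function name is hypothetical, and `Referer` is deliberately not required because it can be legitimately absent (for instance on direct visits or under strict referrer policies).

```python
# Substrings that identify common scripted clients (from the examples above).
BLOCKED_AGENT_MARKERS = ("curl", "python-requests", "scrapy")

# Headers treated as mandatory here; Referer is left out on purpose.
EXPECTED_HEADERS = ("accept", "accept-language")


def looks_automated(headers):
    """Heuristic: True if the request headers resemble a scripted client."""
    # Header names are case-insensitive, so normalise them first.
    normalized = {name.lower(): value for name, value in headers.items()}
    agent = normalized.get("user-agent", "").lower()
    if not agent or any(marker in agent for marker in BLOCKED_AGENT_MARKERS):
        return True
    # A missing or empty expected header is treated as suspicious.
    return any(not normalized.get(name) for name in EXPECTED_HEADERS)
```

Checks like this are easy to evade, since any client can send browser-like headers, so treat a positive result as one signal among several rather than as proof.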

Further reading: Nginx rate limiting module · Rate Limiting with Nginx · Fail2ban

CAPTCHA and challenge-response

CAPTCHA operates at the website level, not at the search endpoint itself. Tools like reCAPTCHA v3 run passively on the page, scoring each visitor's behaviour. If a visitor's score falls below your threshold — indicating likely bot activity — you can block or challenge them before a search request is ever fired.

Apply challenges selectively. Adding a visible CAPTCHA to every search interaction creates unnecessary friction for real users. The recommended approach is to trigger a challenge only when suspicious behaviour is detected, such as an unusually high query rate from a single session or a low trust score returned by your WAF.

| Solution | Notes |
|---|---|
| Cloudflare Turnstile | Free, invisible to users, straightforward to integrate |
| Google reCAPTCHA v3 | Invisible scoring; show a visible challenge only below a score threshold |
| hCaptcha | Privacy-focused alternative to reCAPTCHA |
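The selective approach described above can be sketched as a small decision function. It assumes you already have a trust score between 0.0 and 1.0 for the visitor (reCAPTCHA v3 returns one in its verification response) and a count of recent searches for the session. The function name and both default thresholds are hypothetical and would need tuning against your own traffic.

```python
def should_challenge(score, queries_last_minute,
                     min_score=0.5, max_queries_per_minute=30):
    """Decide whether to show a visible challenge before allowing a search.

    score: trust score in [0.0, 1.0], or None if no score is available.
    queries_last_minute: searches this session issued in the last minute.
    """
    if score is None:
        # Assumption: without a score, fall back to the rate signal only.
        return queries_last_minute > max_queries_per_minute
    if score < min_score:
        return True  # low trust score: likely automated
    return queries_last_minute > max_queries_per_minute
```

A visitor with a healthy score and a normal search rate never sees a challenge; only a low score or an unusually high query rate triggers one.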

Protect Search Endpoints

This setting addresses a potential security risk for intranet and extranet projects.

If your search endpoints are publicly accessible, anyone who knows — or can guess — a valid siteId could query the Search and Suggestion endpoints directly and retrieve indexed results. This means your content may be exposed through the API even if your website itself is not publicly accessible.

Enabling Protect Search Endpoints mitigates this risk by requiring authorization on all search and suggestion requests. You can find these options under Search Settings → General → Access Control.

These settings are available for Content Search customers only.

Require API Key Authentication

When enabled, all Search and Suggestion endpoint requests must include a valid API key.

You can pass the API key in either of the following ways:

- As a query parameter: `token=<your-api-key>`

- As a request header: `Authorization: <your-api-key>`

The Site Search 360 JavaScript plugin is not compatible with this setting. This option is intended for custom integrations where you control the request and can attach the API key server-side.
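For a custom server-side integration, both ways of attaching the key could look roughly like the sketch below. It only builds the requests and does not send them. The base URL is a placeholder, and the `query` parameter name is an assumption; only `token`, the `Authorization` header, and `siteId` come from this article. Substitute the endpoint and parameters from your own integration.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Placeholder endpoint, NOT a real Site Search 360 URL.
SEARCH_URL = "https://search.example.invalid/search"


def request_with_token_param(base_url, params, api_key):
    """Attach the API key as the `token` query parameter."""
    query = urlencode({**params, "token": api_key})
    return Request(f"{base_url}?{query}")


def request_with_auth_header(base_url, params, api_key):
    """Attach the API key in the `Authorization` request header."""
    return Request(f"{base_url}?{urlencode(params)}",
                   headers={"Authorization": api_key})


if __name__ == "__main__":
    # `query` is a hypothetical parameter name used for illustration.
    params = {"siteId": "example.com", "query": "shoes"}
    req = request_with_auth_header(SEARCH_URL, params, "demo-key")
    print(req.full_url, req.get_header("Authorization"))
```

The header variant keeps the key out of URLs, which tend to end up in access logs and browser history, so it is usually the safer default when you control the client.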

Restrict by Allowed Host Names

Only Search and Suggestion requests originating from the specified host names will be accepted. Leave this field blank to allow requests from all host names.

This setting validates the Origin request header only. Because the Origin header can be spoofed, this should be treated as a basic restriction layer — not a security guarantee.
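Conceptually, a host-name restriction of this kind boils down to comparing the host in the `Origin` header against an allow list. The sketch below illustrates that idea and why it is only a basic layer: the header value is supplied by the client. It is not the service's implementation, and the handling of requests without an `Origin` header is an assumption.

```python
from urllib.parse import urlsplit


def origin_allowed(origin_header, allowed_hosts):
    """Return True if the Origin header's host is on the allow list."""
    if not allowed_hosts:
        return True  # blank list: requests from all host names are accepted
    if not origin_header:
        return False  # assumption: a missing Origin is rejected when a list is set
    host = urlsplit(origin_header).hostname
    allowed = {h.lower() for h in allowed_hosts}
    return host is not None and host.lower() in allowed
```

Because a non-browser client can set `Origin: https://www.example.com` by hand, a request that passes this check is not necessarily coming from your site. Combine it with API key authentication when the indexed content is sensitive.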